Biden executive order on sensitive personal information does little for now to curb data market – bu

The dangers posed by the largely unregulated commercial data market prompted the Biden administration to try to prevent adversarial countries from exploiting Americans’ sensitive personal data.

The Biden administration has identified “countries of concern” exploiting Americans’ sensitive personal data as a national emergency. To address the crisis, the White House issued an executive order on Feb. 28, 2024, aimed at preventing these countries from accessing Americans’ bulk sensitive personal data.

The order doesn’t specify the countries, but news reports cited unnamed senior administration officials identifying them as China, Russia, North Korea, Iran, Cuba and Venezuela.

The executive order adopts a simple, broad definition of sensitive data that should be protected, but the order is limited in the protections it affords.

The order’s larger significance lies in its stated rationale for why the U.S. needs such an order to protect people’s sensitive data in the first place. The national emergency is the direct result of the staggering quantities of sensitive personal data up for sale – to anyone – in the vast international commercial data market, which comprises companies that collect, analyze and sell personal data.

Data brokers are using ever-advancing predictive and generative artificial intelligence systems to gain insight into people’s lives and exploit that power. This is increasingly posing risks to individuals and to domestic and national security.

I am an attorney and law professor, and I work, write and teach about data, information privacy and AI. I appreciate the spotlight the order puts on the dangers of the data market by acknowledging that companies collect more data about Americans than ever before – and that the data is legally sold and resold through data brokers. These dangers underscore Congress’ failure to protect people’s most sensitive data.

Sensitive personal data can be fodder for blackmail, can raise national security concerns and can be used as evidence in prosecutions. This is especially true in this era of misinformation and deepfakes – AI-generated video or audio impersonations – and with recent U.S. federal and state court rulings that permit states to restrict and criminalize private personal choices, including those related to reproductive rights. The executive order seeks to protect Americans from these risks – at least from those countries of concern.

What the executive order does

The order issues directives to federal agencies to counter certain countries’ continuing efforts to access Americans’ bulk sensitive personal data as well as U.S. government-related data. Among other concerns, the order emphasizes that personal data could be used to blackmail people, including military and government personnel.

Under the order, the Department of Justice will develop and issue regulations that prevent the large-scale transfer of Americans’ sensitive personal data to countries of concern.

More broadly, the order encourages the Consumer Financial Protection Bureau to take steps to boost compliance with federal consumer protection law. In part, this could help restrict overly invasive collection and sale of sensitive data and reduce the amount of financial information – like credit reports – that data brokers collect and resell.

The order also directs pertinent federal agencies to prohibit data brokers from selling bulk health and genomics data to the countries of concern. It recognizes that data brokers and their customers are increasingly able to use AI to analyze health data, genomics data and other types of data that do not contain individuals’ identities, and to link that data to particular individuals.

Defining sensitive personal data

From an information privacy standpoint, the order is significant for its broad definition of what constitutes sensitive personal data. Included in this umbrella term are “covered personal identifiers, geolocation and related sensor data, biometric identifiers, human omic data, personal health data, personal financial data, or any combination thereof.” Not included in the definition is any data that is a matter of public record.

The broad definition is significant because it affirms a departure from the U.S. legal system’s standard approach to data, which is sector by sector. Generally, federal and state laws protect different types of data, like health data, biometric data and financial data, in different ways. Only the people and entities within those sectors, like your doctor or bank, are regulated in how they use the data.

That piecemeal approach is not well suited to the era of satellites and smart devices, and has left much data, even very sensitive data, unprotected. For instance, smartphones and wearable devices and the apps on them sense, collect, use and disseminate vast quantities of highly revealing health-related data and geolocation data, yet such data is not covered by the Health Insurance Portability and Accountability Act or other data protection laws.

By bringing these historically different categories of data under the broader and more easily understood phrase “sensitive personal data,” policymakers in the executive branch have taken a cue from the Federal Trade Commission’s work to protect sensitive consumer data. The FTC has ordered some data brokers to stop selling sensitive location information about individuals. The order also reflects policymakers’ increasing understanding of what’s required for meaningful data protection in the era of predictive and generative AI.

What the executive order does not do

The executive order specifies that it does not seek to upend the global data market or adversely impact “the substantial consumer, economic, scientific and trade relationships that the United States has with other countries.” It also does not seek to broadly prohibit people in the U.S. from conducting commercial transactions with entities and individuals in or “subject to the control, direction or jurisdiction of” the countries of concern.

Nor does it impose measures that would restrict U.S. commitments to increase public access to scientific research, the sharing and interoperability of electronic health information, and patient access to their data.

Notably, it does not seek to impose a general requirement that companies store Americans’ sensitive data or U.S. government-related data within the territorial boundaries of the U.S., which in theory would provide better protection for the data. It also does not seek to rewrite the 2023 voluntary Data Privacy Framework for transfers of data between the European Union and the U.S.

In sum, it does little to change U.S. commercial data brokers’ activities and practices – except when such activities involve those countries of concern.

What’s next?

The various agencies directed to act must do so within time periods clearly specified in the order, ranging from four months to a year, so for now it is a waiting game. In the meantime, President Joe Biden has joined a long list of people who continue to urge Congress to pass comprehensive bipartisan privacy legislation.

Anne Toomey McKenna is Co-Chair of the Institute of Electrical and Electronics Engineers (IEEE)-USA's Artificial Intelligence Policy Committee (AIPC), which involves subject matter and education-related interaction with U.S. Senate and House congressional staffers and the Congressional AI Caucus. McKenna has received funding from the National Security Agency for the development of legal educational materials about cyberlaw and funding from The National Police Foundation together with the U.S. Department of Justice-COPS division for legal analysis regarding the use of drones in domestic policing.

Read These Next

Beyond Disney: A 1616 portrait of Pocahontas shows how English colonizers saw Indigenous Americans

The English assumed people they colonized would convert to their way of life, including Protestant Christianity…

You’ve been trying to get around Amazon – but it’s not that easy

For shoppers tying to avoid Amazon, its expansion into shipping and logistics for thousands of companies…

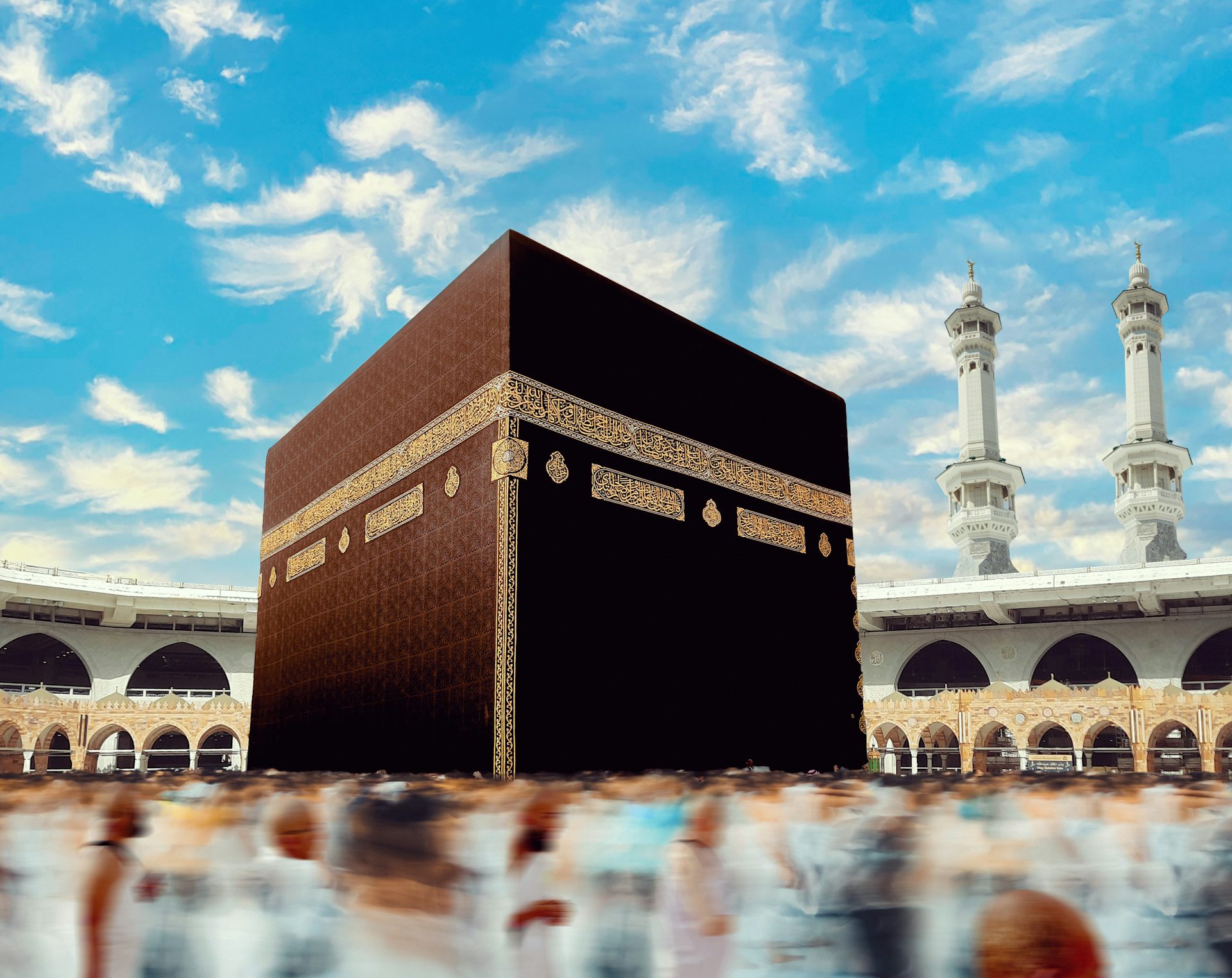

The sacred cloth at the center of the Hajj pilgrimage

As millions gather for Hajj, they will circle the Kaaba, which is draped in the black cloth known as…