Making sense of a chaotic planet: How understanding weather and climate risks depends on supercomputers

Behind the long-term climate projections that affect our lives sits one of the most remarkable scientific achievements of the modern era.

Have you ever stopped to wonder how forecasters can predict the weather days in advance, or how scientists figure out how the climate might evolve under different policies?

The Earth system is a vast web of intertwined processes, from microscopic chemical reactions to towering storms. Ocean currents circulating deep in the Atlantic, forests exchanging carbon with the atmosphere, and humans altering the composition of the air all have effects that ripple through the system. These processes are governed by physical laws, such as conservation of mass, energy and momentum.
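Here is what one of these laws looks like in equation form. Conservation of mass for air can be written as the continuity equation below, where ρ is air density, **u** is the wind velocity and t is time. It says that the density in any small volume changes only as much as air flows in or out of it. Climate models solve equations of this kind alongside those for energy and momentum, although the exact formulations differ from model to model.

$$
\frac{\partial \rho}{\partial t} + \nabla \cdot (\rho \mathbf{u}) = 0
$$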

All of this plays out on such a large scale that no single human mind can truly grasp it in full. And yet, the system is so sensitive that a small perturbation, given enough time, can steer its trajectory in a dramatically different direction. This sensitivity is called “chaos,” also known as the “butterfly effect.” The planet is, at once, immense and delicate.
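The butterfly effect can be reproduced with the Lorenz-63 system. Edward Lorenz studied this three-variable toy model of convection in 1963, and it is not a climate model. The Python sketch below integrates two copies that start 0.000000001 apart in one variable. It uses the classic parameters σ = 10, ρ = 28, β = 8/3 and a fourth-order Runge-Kutta step. The step size, starting point and run length are assumptions chosen only for illustration.

```python
import math


def lorenz_rhs(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Time derivatives (dx/dt, dy/dt, dz/dt) of the Lorenz-63 system."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(state, dt):
    """Advance the state by dt with the classical fourth-order Runge-Kutta method."""
    def shifted(base, slope, h):
        return tuple(b + h * s for b, s in zip(base, slope))

    k1 = lorenz_rhs(state)
    k2 = lorenz_rhs(shifted(state, k1, dt / 2))
    k3 = lorenz_rhs(shifted(state, k2, dt / 2))
    k4 = lorenz_rhs(shifted(state, k3, dt))
    return tuple(
        s + dt / 6 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def separation_history(perturbation=1e-9, dt=0.01, n_steps=4000):
    """Distance between two runs whose starting points differ by `perturbation` in x."""
    run_a = (1.0, 1.0, 1.0)
    run_b = (1.0 + perturbation, 1.0, 1.0)
    history = [math.dist(run_a, run_b)]
    for _ in range(n_steps):
        run_a, run_b = rk4_step(run_a, dt), rk4_step(run_b, dt)
        history.append(math.dist(run_a, run_b))
    return history


history = separation_history()
for step in range(0, len(history), 1000):
    print(f"t = {step * 0.01:4.0f}: separation = {history[step]:.2e}")
```

The printed gap grows from about a billionth until it is as large as the pattern the two runs trace out, after which the two runs are effectively unrelated. The exact numbers depend on the step size and on floating-point details.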

Despite this complexity and scale, scientists are able to simulate and anticipate how the climate will change.

How is this even possible? Behind the long-term climate projections that affect our lives sits one of the most remarkable scientific achievements of the modern era: climate models that run on supercomputers.

I am a climate data scientist. My colleagues and I try to understand extreme weather and long-term climate risks by using virtual versions of Earth inside these machines.

What a climate model really is

Here is the simplest way to picture a climate model:

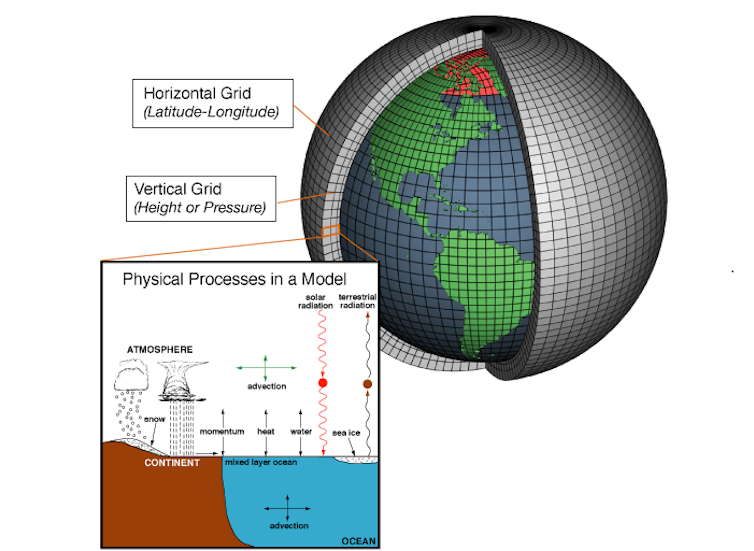

Imagine dividing the entire planet into 3D boxes. At the surface, each box might represent an area 50 to 100 kilometers across. Then we stack boxes upward into the atmosphere and downward into the oceans to create a 3D grid wrapping around the globe.

Each box contains numbers: temperature, wind speed, humidity, sea ice thickness, soil moisture and hundreds of other variables. The model contains mathematical expressions that describe how these variables influence one another: how heat moves, how air rises and sinks, how moisture condenses into clouds, how the ocean absorbs and redistributes energy.

We then let the model march forward in time, solving the math and updating every variable in every box. Then again. And again.
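A toy version of this loop might look like the Python sketch below. It uses a small 2D grid of boxes holding one variable (temperature). Each step applies an explicit finite-difference diffusion rule that moves heat from warmer boxes to cooler neighbors. Everything here is an assumption for illustration: real models solve many coupled equations in 3D on a sphere, while this grid simply wraps around at its edges to keep the code short. The parameter `alpha` stands for kappa × dt / dx², and this explicit scheme is only stable for alpha ≤ 0.25.

```python
def diffuse_step(grid, alpha):
    """One explicit diffusion step on a 2D grid that wraps around at its edges.

    alpha = kappa * dt / dx**2 (dimensionless); must be in (0, 0.25] for stability.
    """
    if not 0 < alpha <= 0.25:
        raise ValueError("explicit 2D diffusion requires 0 < alpha <= 0.25")
    ny, nx = len(grid), len(grid[0])
    new_grid = []
    for j in range(ny):
        row = []
        for i in range(nx):
            t = grid[j][i]
            # Sum of the four neighboring boxes (indices wrap around the edges).
            neighbors = (grid[j][(i + 1) % nx] + grid[j][(i - 1) % nx]
                         + grid[(j + 1) % ny][i] + grid[(j - 1) % ny][i])
            row.append(t + alpha * (neighbors - 4.0 * t))
        new_grid.append(row)
    return new_grid


def run_model(grid, alpha, n_steps):
    """March forward in time: update every box, then again, and again."""
    for _ in range(n_steps):
        grid = diffuse_step(grid, alpha)
    return grid


grid = [[15.0] * 20 for _ in range(10)]  # 10 x 20 boxes at 15 degrees
grid[5][10] = 30.0                       # one warm box
total_before = sum(map(sum, grid))
final = run_model(grid, alpha=0.2, n_steps=50)
print(total_before, sum(map(sum, final)))  # total heat is unchanged up to rounding
print(max(map(max, final)))                # the warm spot has spread out and cooled
```

Because heat only moves between neighboring boxes, the grid's total heat stays constant while the warm spot spreads out, a small-scale echo of the conservation laws the models are built on.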

Now scale that up. Millions of grid boxes. Hundreds of variables per box. Calculations carried out millions of times to simulate decades or even centuries.

And because the system is chaotic, we do not run the model just once. We run it many times with slightly different initial conditions, a set of runs that scientists call an ensemble. This lets us separate the system's true response to the scenario being studied, such as warming driven by increased emissions, from random fluctuations caused by chaos.
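The Python sketch below is a toy illustration of why ensembles help. Each "member" combines a prescribed warming trend (an assumed 0.02 degrees per year) with chaotic noise from the logistic map x → 4x(1 - x), which stands in for internal variability. Members start from initial values that differ by one millionth. The trend, the noise model and the ensemble size are all hypothetical and do not come from a real climate model.

```python
def chaotic_variability(x0, n_years, amplitude):
    """Stand-in for internal variability: the chaotic logistic map, rescaled to [-amplitude, amplitude]."""
    x = x0
    values = []
    for _ in range(n_years):
        x = 4.0 * x * (1.0 - x)
        values.append(amplitude * (2.0 * x - 1.0))
    return values


def run_member(x0, trend_per_year, n_years=100, amplitude=0.5):
    """One toy 'model run': a prescribed forced trend plus chaotic noise."""
    noise = chaotic_variability(x0, n_years, amplitude)
    return [trend_per_year * year + noise[year] for year in range(n_years)]


def ols_slope(series):
    """Least-squares linear trend of a series sampled once per year."""
    n = len(series)
    t_mean = (n - 1) / 2
    y_mean = sum(series) / n
    num = sum((t - t_mean) * (y - y_mean) for t, y in enumerate(series))
    den = sum((t - t_mean) ** 2 for t in range(n))
    return num / den


def run_ensemble(trend_per_year, base_x0, n_members=20, perturbation=1e-6):
    """Members whose initial states differ only by a tiny perturbation."""
    return [run_member(base_x0 + k * perturbation, trend_per_year)
            for k in range(n_members)]


forced = run_ensemble(trend_per_year=0.02, base_x0=0.3)
control = run_ensemble(trend_per_year=0.0, base_x0=0.41)
forced_trend = sum(ols_slope(m) for m in forced) / len(forced)
control_trend = sum(ols_slope(m) for m in control) / len(control)
print(f"single-member trends range from {min(map(ols_slope, forced)):.4f} "
      f"to {max(map(ols_slope, forced)):.4f}")
print(f"ensemble-mean trend: forced {forced_trend:.4f}, control {control_trend:.4f}")
```

The individual members drift apart, and their separate trend estimates scatter. Averaging across the ensemble brings the estimate close to the prescribed 0.02 per year in the forced case and close to zero in the control. In this toy, that is how an ensemble separates the response to a scenario from the effects of chaos.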

The result is an astronomical number of calculations. Performing them requires computers capable of executing quadrillions of operations per second, known as petaflop-scale supercomputers. A petaflop equals 1 quadrillion (1,000,000,000,000,000) floating-point calculations per second!
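A back-of-the-envelope estimate makes the scale concrete. The Python sketch below multiplies grid boxes, variables, arithmetic per variable and time steps. Apart from Earth's surface area (about 510 million square kilometers), every setting is a hypothetical round number, not the configuration of any real model.

```python
# Hypothetical settings chosen for illustration, not taken from any real model.
EARTH_SURFACE_KM2 = 510_000_000    # Earth's surface area, about 510 million km^2
BOX_EDGE_KM = 100                  # horizontal grid spacing
VERTICAL_LEVELS = 50               # stacked atmosphere + ocean layers (assumed)
VARIABLES_PER_BOX = 100            # "hundreds of variables" (assumed)
OPS_PER_VARIABLE_PER_STEP = 1_000  # arithmetic per variable per time step (assumed)
STEPS_PER_DAY = 48                 # a 30-minute time step (assumed)
YEARS = 100
MEMBERS = 50                       # ensemble size (assumed)

columns = EARTH_SURFACE_KM2 // BOX_EDGE_KM**2
boxes = columns * VERTICAL_LEVELS
steps = STEPS_PER_DAY * 365 * YEARS
ops_per_run = boxes * VARIABLES_PER_BOX * OPS_PER_VARIABLE_PER_STEP * steps
ops_ensemble = ops_per_run * MEMBERS

PETAFLOP = 10**15  # operations per second
hours_at_one_petaflop = ops_ensemble / PETAFLOP / 3600

print(f"{boxes:,} grid boxes, {steps:,} time steps")
print(f"{ops_per_run:.2e} operations per run, {ops_ensemble:.2e} for the ensemble")
print(f"about {hours_at_one_petaflop:.1f} hours at a sustained petaflop")
```

Under these assumptions, a 50-member, 100-year ensemble needs about 2 × 10^19 operations. That comes to roughly six hours even if a machine ran at a full petaflop without pause. Real models rarely sustain a machine's peak speed, and finer grids or more detailed physics multiply the cost, so actual run times are considerably longer.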

From simulation to real-world decisions

These simulations inform decisions that affect everyday life: how high to elevate homes in flood-prone areas, how to design power grids resilient to prolonged heat waves, how to manage water resources during drought.

Urban planners, engineers, emergency managers and policymakers all rely on information derived from these models.

Dozens of major climate models have been developed around the world by universities, national laboratories and government agencies. Each modeling center builds its own code, makes its own physical assumptions, chooses its own grid resolution and operates its own supercomputing systems. Through international efforts such as the Coupled Model Intercomparison Project, modeling centers agree on common experiments: the same greenhouse gas scenarios and the same volcanic eruptions, for example.

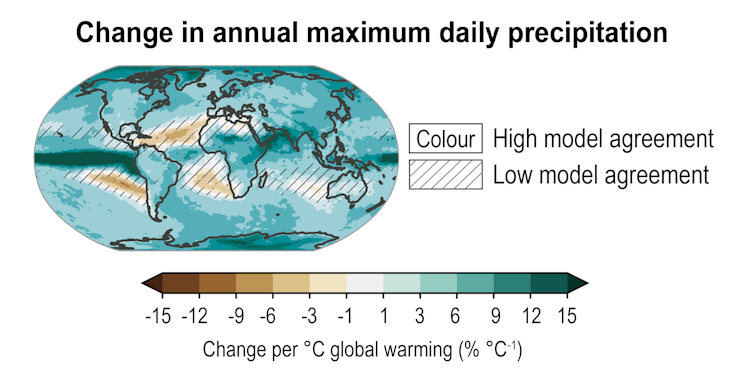

When you hear that extreme rainfall is projected to intensify in a warmer world, or that the Arctic Ocean could become seasonally ice-free within decades, those conclusions are not the result of calculations carried out by a single scientist, a single team of scientists, or even a single model run. They emerge from dozens of independently developed models, run on room-sized supercomputers, under pre-agreed and carefully coordinated experiments.

This global collaboration is one of the reasons scientists know so much about climate change. These shared simulations allow scientists around the world to test hypotheses and explore future risks based on where the models agree.

It is no surprise that the 2021 Nobel Prize in physics recognized pioneers of climate modeling. These models fundamentally transformed humanity’s ability to understand a complex planet.

There is no alternative way to answer "what if" questions about the future climate system. What happens if carbon dioxide doubles? What if emissions decline rapidly? What if a major volcanic eruption injects aerosols into the stratosphere? Because the climate system is so complex, and forces can push it outside the range of historical experience, the past is no longer a reliable guide to the future. So statistical models built only on past observations aren't enough.

Artificial intelligence cannot replace this foundation either. AI has made impressive progress in short-term weather prediction: it learns patterns from vast historical datasets and produces forecasts with remarkable speed.

But climate projections require extrapolating to conditions the planet has not experienced in modern history – such as higher greenhouse gas concentrations. AI can accelerate simulations and analyze massive amounts of data today, but it cannot replace solving the physical equations that govern the system.
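The Python sketch below is a deliberately simple illustration of the extrapolation problem. The "physics" is an assumed response that curves upward with forcing. The "historical record" only samples forcing between 0 and 1. The data-driven predictor is a nearest-neighbor lookup, which is far simpler than modern AI weather models. The point is only that a method relying on similar past cases has nothing to draw on outside the range it has seen.

```python
def true_response(forcing):
    """Hypothetical 'physics': the response grows with the square of the forcing."""
    return forcing ** 2


# Hypothetical 'historical record': forcing has only ever ranged from 0.0 to 1.0.
past_cases = [(k / 10, true_response(k / 10)) for k in range(11)]


def nearest_neighbor_predict(forcing, cases):
    """Data-driven guess: copy the response of the most similar past case."""
    _, response = min(cases, key=lambda case: abs(case[0] - forcing))
    return response


for forcing in (0.52, 2.0):
    guess = nearest_neighbor_predict(forcing, past_cases)
    print(f"forcing {forcing}: data-driven {guess:.2f}, physics {true_response(forcing):.2f}")
```

Inside the sampled range, the lookup lands close to the right answer. At a forcing of 2, it still answers 1.0 while the assumed physics gives 4.0. Evaluating the governing equation, here simply `true_response`, has no such limit.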

National supercomputing centers are essential

In the United States, major climate modeling efforts have been supported by national laboratories and federal centers, including NASA and the National Center for Atmospheric Research, or NCAR, along with a few research universities.

At NCAR, scientists developed the Community Earth System Model, a comprehensive climate model that’s arguably one of the best models to date and is used by researchers across the country and around the world to study climate change, severe weather, climate effects on wildfires, and atmospheric patterns. It has helped position the United States at the forefront of climate science and enabled the global research community to tackle some of the most pressing challenges of our time.

Running large ensembles with this model requires powerful hardware, data storage systems capable of handling petabytes of output, and engineers who keep these systems operational. This is not a matter of downloading and running a program on a laptop. It is a national-scale scientific enterprise that makes NCAR and its supercomputer essential.
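The Python sketch below gives a rough sense of where petabytes come from. Its settings are hypothetical: about 2.55 million grid boxes, 100 variables stored as 4-byte single-precision numbers, one snapshot per simulated day, 100 years and 50 members. Real models store different variables at different frequencies.

```python
# Hypothetical output settings, not the configuration of CESM or any other model.
GRID_BOXES = 2_550_000
VARIABLES = 100
BYTES_PER_VALUE = 4          # single-precision floating point
SNAPSHOTS_PER_YEAR = 365     # one snapshot per simulated day
YEARS = 100
MEMBERS = 50

bytes_per_snapshot = GRID_BOXES * VARIABLES * BYTES_PER_VALUE
bytes_per_member = bytes_per_snapshot * SNAPSHOTS_PER_YEAR * YEARS
ensemble_bytes = bytes_per_member * MEMBERS

print(f"{bytes_per_snapshot / 1e9:.2f} GB per snapshot")   # 1.02 GB per snapshot
print(f"{bytes_per_member / 1e12:.1f} TB per member")      # 37.2 TB per member
print(f"{ensemble_bytes / 1e15:.2f} PB for the ensemble")  # 1.86 PB for the ensemble
```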

In a warming climate, the stakes are high. The ability to simulate the Earth system at scale is one of the most powerful tools humanity has to prepare for the risks ahead.

Antonios Mamalakis does not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment.
