If using ChatGPT is cheating, what about ghostwriting? The old debate behind a new panic

Has our culture’s begrudging acceptance of ghostwriting paved the way for everyone – not just the rich and famous – to offload the hard work of writing?

In February 2023, less than three months after the launch of ChatGPT, Vanderbilt University sent an email to its student body in the wake of a fatal campus shooting at Michigan State.

“The recent Michigan shootings are a tragic reminder of the importance of taking care of each other,” the email read in part. In tiny type at the bottom of the message, a disclaimer appeared: “paraphrased from OpenAI’s ChatGPT.”

Students immediately objected.

“There is a sick and twisted irony to making a computer write your message about community and togetherness because you can’t be bothered to reflect on it yourself,” one senior wrote.

A Vanderbilt apology email quickly followed. The university launched a professionalism and ethics investigation. One associate dean framed the misstep as the result of learning pains tied to the adoption of new technology.

Chatbots have spawned a host of ethical questions about writing assistance for teachers, students and authors.

But similar debates about ghostwriting have been taking place for over a century, revealing a persistent discomfort with the idea that the words we read might not belong to the person whose name is attached to them.

Outsourcing authorship

Ghostwriting, a paid arrangement in which one person writes under another’s name, has existed for over a century.

The term seems to have first appeared in the English language in a 1908 newspaper article, which I encountered while researching my forthcoming book, “Ghostwriting: A Secret History, from God to A.I.” The story appeared in the Daily Star, in Lincoln, Nebraska, and describes an anonymous writer who earned US$5,000 to help a high-society woman write a book.

Today, ghostwriting usually involves collaborations between professional writers and celebrities or professionals who otherwise wouldn’t have the time, skill or connections to write a book.

On publication of the manuscript, the ghostwriter is typically named, albeit obliquely – perhaps identified as a friend or consultant in the acknowledgments section. In some instances, the ghostwriter’s name appears alongside the credited author’s on the cover. Either way, the client assumes ownership of the ghostwriter’s work.

An ethical gray area

And yet when I type “the practice of one person writing in another person’s name” into Google, the search engine doesn’t spit out “ghostwriting.”

My first hit is “pseudonym” or “alias.” “Plagiarism,” “libel” and “slander” aren’t far behind. A 1953 article titled “Ghost Writing and History” that appeared in The American Scholar also points out that in the mid-20th century, “forgery” – falsely imitating another’s work with the intent to deceive – and “ghostwriting” could be used interchangeably by scholars.

In other words, even when consensual and compensated, ghostwriting has some relatives that are ethically suspect. And maybe that’s why many clients obscure the fact that they’ve used a ghostwriter, and why responses to ghostwritten works often reflect uneasiness with the practice.

“You should be ashamed,” read one social media post, written in response to Millie Bobby Brown’s 2023 debut novel, which she co-wrote with a ghostwriter. “[The ghostwriter’s] name should be on the cover. She was the one who actually wrote the book.”

The discomfort goes both ways: “I feel so guilty and ashamed whenever I use a ghostwriter now because I feel people will think I’m lying,” an anonymous poster on Reddit admitted.

Both the criticism and self-flagellation imply that the act of claiming another person’s words can render these words deceitful, even if the words have been paid for and the content is true.

Ghostwriting agencies rush to defuse these worries. Ghostwriting has been around forever, the Association of Ghostwriters reassures its clients. Ghostwriting is consensual and collaborative – not lazy, deceptive or a form of “selling out,” an author who’d recently used ghostwriting services explained.

And yet, in the last chapter of her ghostwritten book, Whoopi Goldberg acknowledges some misgivings about using a ghostwriter.

“I meant to try (to write the book myself),” Goldberg writes. “And when it turned out I couldn’t quite pull it off … I looked for help.”

Goldberg frames the assistance of ghostwriting as something she deserved after overcoming obstacles as a Black woman. But Goldberg also has financial resources available that others looking for writing assistance usually don’t. High-end ghostwriters collect in the mid-six figures for their services; Prince Harry’s ghostwriter, J.R. Moehringer, supposedly scored a $1 million advance.

Cue chatbots. Generative AI promises to be the ghostwriter for the masses, so much so that ghostwriter Josh Lisec explained to me how, in the future, ghostwriting will need to be marketed as a boutique service for elites if it is to survive.

Naming names

Whether you’re paying for a ghostwriter or using a free chatbot, “assistance” or “collaboration” on intellectual and artistic work is not automatically unethical.

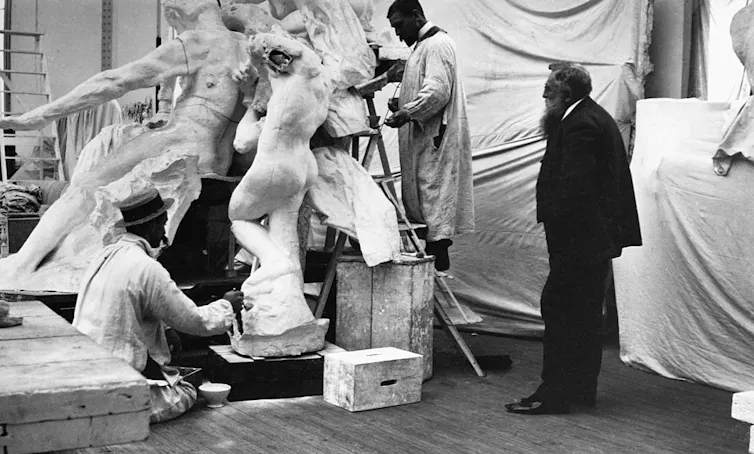

Editors have long made a career out of helping authors shape their writing. Visual artists have long employed studio assistants. Television shows are routinely written collaboratively in writers’ rooms.

And yet, accepting assistance on intellectual or artistic work can raise legitimate questions, particularly with regard to how that assistance is acknowledged and how much assistance can be accepted while still calling a project “ours.”

In the late 19th century, for example, one sculptor went to court to rebut a claim that his assistant – whom the press referred to as a “ghost” – had completed sculptures for which the sculptor took credit. The judge announced that an artist could accept, with integrity, a certain amount of mechanical assistance. But he added that there was a threshold when artistic assistance became “dishonest.” The judge made the accused sculptor craft a bust in real time to prove his skill.

Similarly, most educators find it more acceptable when their students turn to ChatGPT for editing assistance than when they use it to generate a document from scratch.

Many universities now allow AI as a tool but require users to verify its accuracy and disclose its use – rules that echo long-standing ghostwriting contracts.

Yet even verified AI-generated text, if claimed solely as an individual’s work, can violate policy at my institution, the University of Southern California: “You should never attempt to present … content created by others, including generative AI, as your own.”

The same policies that govern appropriate AI use also come up in ghostwriting contracts. The ghostwriter signs a “warranty of originality” that promises the author that the ghostwriter has – via platforms such as iThenticate – fact-checked and plagiarism-checked their work.

When inaccuracies do crop up, ghostwriters often take the fall.

Former Department of Homeland Security Secretary Kristi Noem blamed her ghostwriter for indicating in her memoir that she had met North Korean dictator Kim Jong Un. Physician David Agus, who teaches at the University of Southern California Keck School of Medicine, held his ghostwriter responsible for the many instances of plagiarism that were identified in his popular science books.

Ghostwriters willingly provide assistance and accept responsibility for the originality of what they write. Scholars have permission to use generative AI, provided they properly cite its use.

And yet when Vanderbilt administrators advertised that their email had been written with the assistance of ChatGPT, students and faculty pushed back.

University policies and book contracts may offer veils of legitimacy and shields from legal liability. But in the end, readers still seem to want the words they’re reading to come from the mind of the person whose name is on the byline.

Emily Hodgson Anderson does not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment.
